How do you detect a deepfake?

Ali Niknam, CEO of Bunq Bank, recently shared an alarming incident that underscores the threat deepfakes pose to many Dutch companies. In a LinkedIn post, Niknam disclosed that a Bunq employee received a convincing video call, purportedly from him, requesting a substantial fund transfer. The video was later found to be a deepfake.

The case is one of several recent episodes in which fraudsters are believed to have used deepfake technology to modify publicly available video and other footage to cheat people out of money. As with many technologies, synthetic media techniques can be used for both positive and malicious purposes. But what is a deepfake, and how can we protect against malicious actors using it?

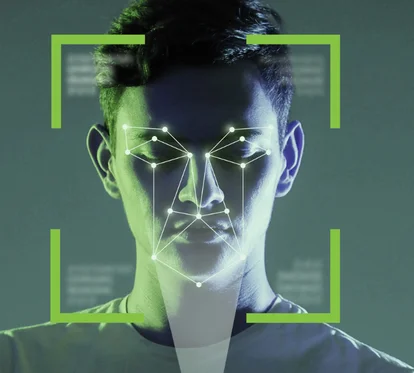

What is a Deepfake?

Synthetic media threats span text, video, audio, and images, all of which are used for a variety of purposes online and in communications of all types.

Deepfakes are a particularly concerning type of synthetic media that utilizes artificial intelligence/machine learning (AI/ML) to create believable and highly realistic media. The most substantial threats from the abuse of synthetic media include techniques that threaten an organization’s brand, impersonate leaders and financial officers, and use fraudulent communications to enable access to an organization’s networks, communications, and sensitive information.

Impact of Deepfakes: understanding for a secure digital future

Understanding Deepfakes is crucial because they have significant implications for society, politics, cybersecurity, and public trust.

Deepfakes can distort reality, creating highly realistic video or audio content that can be hard to distinguish from authentic materials. This can undermine trust in the media and the information we rely on to make informed decisions. In politics and other domains, Deepfakes can manipulate public opinion, discredit opponents, sow confusion, and fuel conspiracy theories. The immoral use of Deepfake technology, for creating compromising materials or online harassment, raises serious issues of ethics and respect for individual rights.

As technology continues to advance, Deepfakes are likely to become increasingly sophisticated and harder to detect. Understanding how they work and finding ways to identify them are essential for developing technologies and strategies to counter their malicious use. Lastly, raising public awareness of the existence and capabilities of Deepfakes can help mitigate their impact by fostering healthy skepticism toward dubious content and encouraging people to verify information against multiple sources.

For all these reasons, it's important to develop a collective understanding of Deepfakes and their potential to affect society. This will enable us to navigate an increasingly complex digital world with discernment and greater caution.

Tools and techniques for detecting Deepfakes

Detecting Deepfakes is an ever-evolving challenge as the AI technologies underlying their creation become increasingly sophisticated. In response, researchers and developers are building new tools and techniques to identify these falsifications. Here are some of the most promising approaches to Deepfake detection:

Basic techniques for detecting Deepfakes

- Don't believe everything you see online: the internet is a vast source of information, but not all of it is true. It's important to develop healthy skepticism and carefully analyze any video or photo content before accepting it as real.

- Look for signs of manipulation: Deepfakes can be very sophisticated, but they can often be identified by certain clues. Pay attention to lighting discrepancies, alignment errors, skin irregularities, or lip-syncing issues.

- Check the source: where does the video or image come from? Is it being distributed on a trusted platform? Look for confirmation from credible sources or directly from the entities or individuals involved.

- Use verification tools: there are numerous organizations and online tools that can help you verify if the information is real. Use them to investigate the authenticity of suspicious content.

- Don't rely on a single source: seek confirmation from multiple credible sources. One video or image is not enough to verify information.

- Learn about Deepfakes: the better you understand how this technology works, the better you'll be able to identify fakes. There are many online resources that explain the principles of Deepfakes and detection methods.

Advanced techniques for detecting Deepfakes

- Behavioral analysis: this method relies on identifying small imperfections or anomalies in the subject's behavior or physical movements in the video clip.

- Consistency of lighting: detecting inconsistencies in lighting is another effective technique. Algorithms analyze shadows, reflections, and how light reflects on different surfaces of the face to determine if the image has been manipulated.

- Skin texture analysis: Deepfake techniques often smooth out skin texture or introduce anomalies in the texture. Detecting these changes, which are often subtle and difficult to spot with the naked eye, can help identify manipulations.

- Compression artifact detection: AI-manipulated videos and images may exhibit unique compression artifacts due to the generation and compression process. Analyzing these artifacts can provide clues that the material has been altered.

- Metadata examination: although Deepfakes themselves can be convincing, the metadata associated with a video or image file (such as creation date, camera type, etc.) may be contradictory or suspicious, suggesting manipulation.

- Verification of breathing and pulse consistency: some advanced techniques analyze minor variations in facial color or shadows, which reflect heartbeats and breathing. Absent or inconsistent patterns can indicate the presence of a Deepfake.

Challenges and limitations

As detection technologies become more sophisticated, so do the methods of creating Deepfakes, which means detection tools need constant updating and improvement. In addition, many of these techniques can produce false positives or false negatives, so their results require further verification and the algorithms require continuous improvement.
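To make the pulse-consistency check from the advanced techniques above more concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not how any particular detection product works. It assumes the average green-channel intensity of the face region has already been measured for every frame (face tracking and region selection are not shown), and it uses a heart-rate band of 0.7 to 4.0 Hz as an assumed plausible range. The function name `dominant_pulse_bpm` and its parameters are hypothetical.

```python
import cmath
import math


def dominant_pulse_bpm(green_means, fps, low_hz=0.7, high_hz=4.0):
    """Return (bpm, peak_share) for a per-frame green-channel signal.

    bpm is the strongest frequency inside the assumed heart-rate band,
    converted to beats per minute. peak_share is the fraction of in-band
    spectral power held by that single frequency: close to 1.0 for a clean
    periodic signal, small for noise. Returns (None, 0.0) when the band
    contains no measurable periodic component.
    """
    n = len(green_means)
    if n < 2 or fps <= 0:
        raise ValueError("need at least two samples and a positive frame rate")

    # Remove the average brightness so only the variation remains.
    mean = sum(green_means) / n
    centered = [value - mean for value in green_means]

    best_power, best_freq, band_power = 0.0, None, 0.0
    # Plain discrete Fourier transform, evaluated only for in-band bins.
    for k in range(1, n // 2 + 1):
        freq = k * fps / n
        if not (low_hz <= freq <= high_hz):
            continue
        coeff = sum(
            sample * cmath.exp(-2j * math.pi * k * t / n)
            for t, sample in enumerate(centered)
        )
        power = abs(coeff) ** 2
        band_power += power
        if power > best_power:
            best_power, best_freq = power, freq

    if best_freq is None or band_power == 0.0:
        return None, 0.0
    return best_freq * 60.0, best_power / band_power


if __name__ == "__main__":
    fps = 30
    # Synthetic stand-in for a real measurement: a faint 1.2 Hz oscillation.
    signal = [100 + 0.5 * math.sin(2 * math.pi * 1.2 * t / fps)
              for t in range(300)]
    bpm, share = dominant_pulse_bpm(signal, fps)
    print(f"estimated pulse: {bpm:.1f} bpm, peak share: {share:.2f}")
```

A missing result or a very low `peak_share` would only be a hint that no stable pulse signal is present. Video compression, motion, and lighting changes can suppress the signal in genuine footage too, so a score like this is subject to the same false positives and false negatives noted above and should be combined with other checks.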

Detecting Deepfakes is a dynamic battlefield between creators of falsified content and those trying to protect the authenticity of information. Continued research and development in this field is crucial to keeping pace with the rapid evolution of the technology.